A Practical Guide to Becoming an AI Product Manager

How to figure out which type of AI PM you want to be, and what to actually prioritize to get there.

👋 I’m Diego. In every article, I document my journey exploring AI and Product Management. I share the hard lessons I’ve learned from my latest “side quests,” report back on what’s actually working, and answer your questions about building in this space.

Search “how to become an AI Product Manager” and you’ll find 50 articles saying the same five things: learn ML basics, build a side project, take a Coursera course, network, tailor your resume.

Maybe you even did some of that. You took a course. You built a small project. But you’re still stuck, because the advice was incomplete.

Nobody told you that “AI Product Manager” covers a spectrum of fundamentally different roles, each requiring different skills, different preparation, and different evidence. Preparing for the wrong type of AI PM role is worse than not preparing at all. You end up building the wrong muscles entirely.

Senior AI PM roles at big tech companies regularly clear $300K or more in total compensation when you factor in equity and bonuses, with some staff-level positions pushing well past $500K. The demand is real. The path to getting there is where most people get lost.

One thing worth getting straight early: there’s a difference between using AI tools to be a better PM and actually building AI products.

Using NotebookLM to organize your research makes you more efficient. Deciding whether an AI agent should explain its reasoning to a user, designing what happens when it gets something wrong, and defining the evals (the systematic tests that measure whether the AI performs well enough to ship) to measure trust? That’s AI product management.

The gap between the two is where most transitioning PMs get stuck.

In this article, I’ll break down the AI PM spectrum based on what I’ve seen across my career (from building ML models at Microsoft to leading AI product experiences at Google), what hiring managers are actually looking for, and what the job postings really mean when you read between the lines.

The AI PM Spectrum

The AI PM spectrum has three points, running from deeply technical to experience-driven. The skills, preparation, and evidence required for each are fundamentally different. Let me walk you through each one.

AI Experiences PM

You build user-facing products powered by AI. Your job is the experience layer: how users interact with AI capabilities, trust them, and get value from them. You don’t build the models. You take model capabilities and turn them into products people love and trust.

Most AI Experiences PMs come from traditional product management backgrounds. If you’re a PM today working on consumer or enterprise products, this is likely the natural transition path for you.

You already know how to define problems, prioritize roadmaps, and ship products. What changes is the nature of what you’re shipping and the product decisions you have to make around it.

In practice, this is what the role looks like in real job postings:

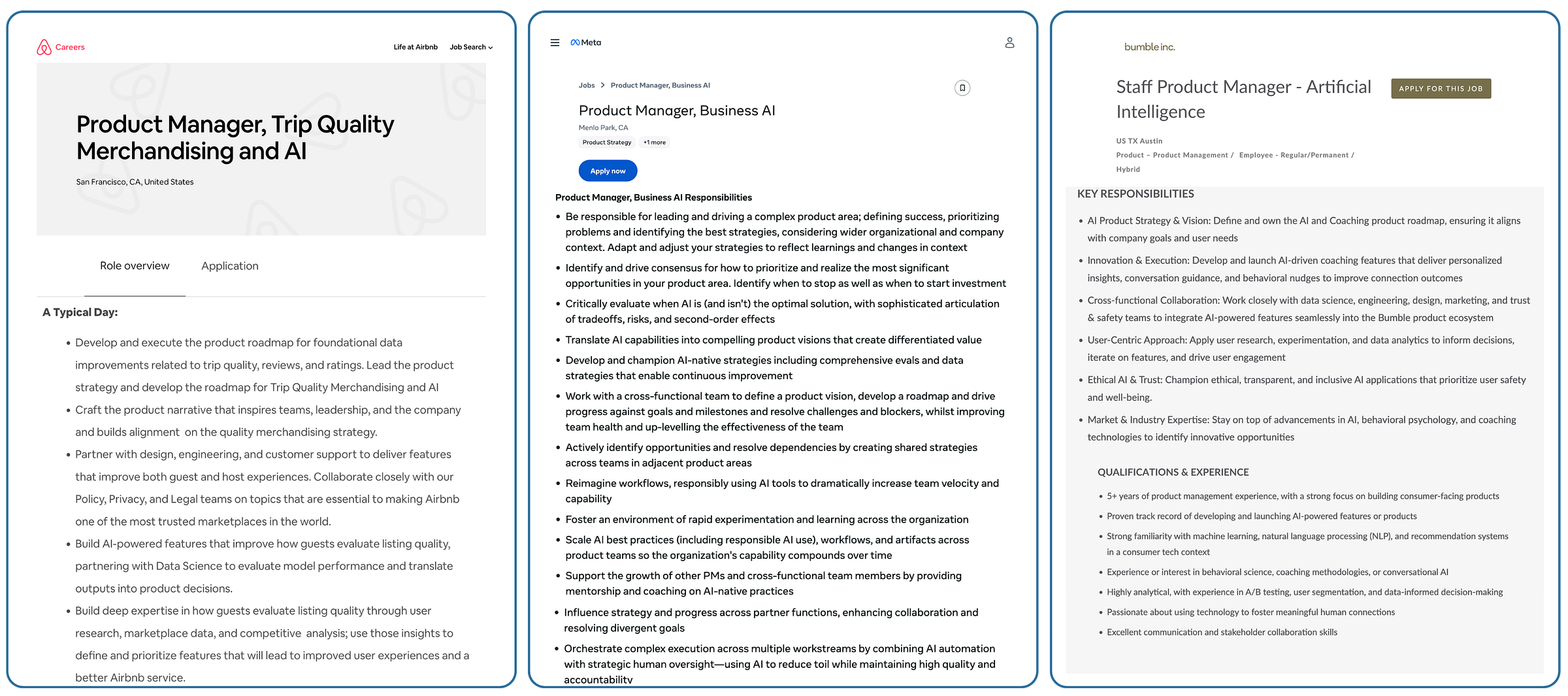

Airbnb, PM - Trip Quality Merchandising & AI ($224K-$280K, 10+ years): The role uses AI to help guests evaluate and choose listings. The PM partners with Data Science to evaluate model performance and translate model outputs into product decisions.

Meta, PM - Business AI ($173K-$241K + bonus + equity, 8+ years): This PM builds AI agents across WhatsApp, Messenger, and ads. The posting explicitly states that the PM must “critically evaluate when AI is and isn’t the optimal solution, with sophisticated articulation of tradeoffs, risks, and second-order effects”.

Bumble, Staff PM - AI (5+ years): A consumer-facing role building AI-powered features for personalization and user experience. The posting asks for experience “developing and launching AI-powered features or products” and familiarity with machine learning and recommendation systems in a consumer context.

Notice what these postings are asking for. They’re not asking you to train models, build data pipelines, or optimize how fast a model runs. They’re asking you to make product decisions about AI: when to use it, how to design around it, and how to translate its outputs into something users actually trust and act on.

My experience as an AI Experiences PM

At Google, I lead Enterprise Data Science Notebooks for data scientists, and part of my work is integrating AI to solve their pain points.

We launched a Data Science Agent that automates end-to-end data science workflows: it takes a user’s prompt, generates a multi-step plan covering data loading, exploration, model training, and evaluation, then executes the code and reasons about the results.

I didn’t build the underlying models or the AI platform. My job was to take those AI capabilities and create the full product experience around them. That meant answering questions like:

How would users discover this agent inside an already complex product?

How would they trust its output, especially when it’s generating and executing code on their behalf?

How do we surface errors when the agent gets something wrong, and what does recovery look like?

What’s the experience when things go well vs. when they go wrong?

What evals do we need to run before going live, and how do we keep iterating on quality after launch?

Every one of those is a product decision. And every one requires understanding how AI behaves in the real world: when it works, when it doesn’t, and what your users need to feel confident using it.

That work doesn’t end at launch either. We keep running evals, updating to new models, and refining how the agent communicates what it’s doing. AI products have a longer tail than traditional features because the model’s behavior can shift and user expectations evolve.

The common mistake I see is PMs who approach AI integration like any other feature launch: add AI to a pain point, ship it, move on. AI is probabilistic, and it will sometimes be wrong even when everything is working as designed. If you haven’t designed the experience around that reality from day one, users will lose trust fast and won’t come back.

You don’t need an engineering background or a CS degree for this path. The technical bar here is about understanding AI behavior and making sound product decisions around it, not about writing code or building models. What you need is AI Product Sense, a skill I’ll break down after we cover the rest of the spectrum.

AI Builder PM

This is a fundamentally different job. You build the AI/ML infrastructure, platforms, and models that other teams consume. You’re the PM for the engine that powers AI features across an entire company. You work alongside data scientists and ML engineers daily, and you need to speak their language fluently.

Where an AI Experiences PM asks “how should users interact with this AI feature?”, an AI Builder PM makes decisions like:

Three product teams need AI capabilities and you have bandwidth for one. Which do you prioritize, and how do you make that call?

Your data science team wants to use a more powerful model, but it’s nearly impossible to explain to users. Do you ship it or push for something more interpretable?

Engineering wants to build a custom model from scratch. An off-the-shelf option gets you 80% of the way there. What do you recommend and why?

The platform is stable, but multiple teams are requesting new features. Do you invest in new model development, better observability tools, or automatic retraining? How do you decide?

Here’s what these roles look like in job postings:

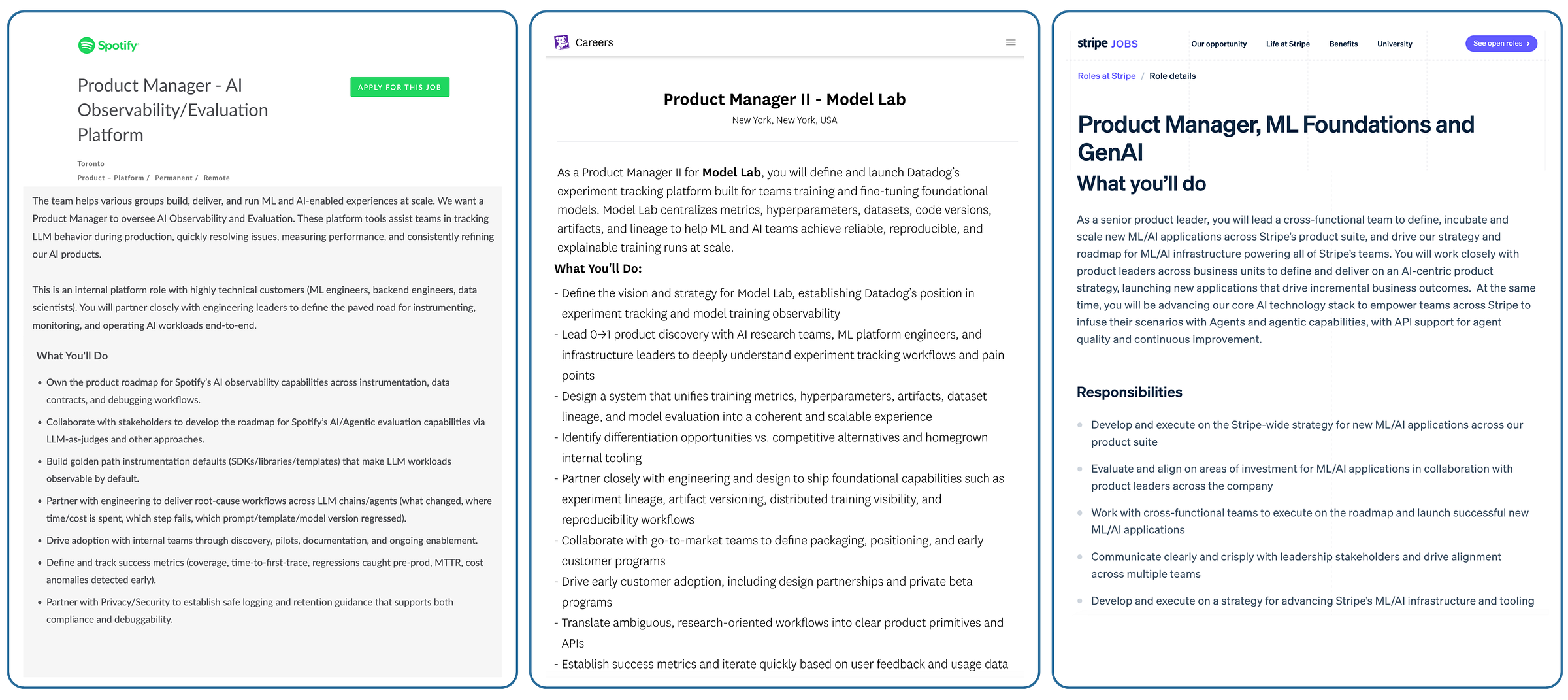

Spotify, PM - AI Observability/Evaluation Platform: An internal platform role where the PM owns the roadmap for tools that help teams track how AI models behave in production, debug issues, and measure performance. The posting asks for familiarity with LLM evaluation approaches like golden datasets (curated test sets used to benchmark model quality) and LLM-as-a-judge (using one AI model to evaluate another).

Datadog, PM II - AI Agent Console (3+ years, ~$155K-$190K base + equity): A zero-to-one product launch building Datadog’s solution for monitoring and managing AI agents across customer environments. Despite the relatively junior experience requirement, the posting asks for technical fluency with LLMs and agent tooling like LangGraph and MCP (Model Context Protocol) servers.

Stripe, PM - ML Foundations and GenAI (7+ years): This PM leads the strategy for new ML/AI applications across Stripe’s entire product suite while also advancing the core AI infrastructure that powers all of Stripe’s teams. The posting requires a solid understanding of ML and applied AI tech stacks.

Notice how different these are from the AI Experiences PM postings. The Spotify role is entirely internal-facing, building tools for ML engineers. The Stripe role requires you to define a company-wide AI strategy and advance core infrastructure.

These PMs aren’t designing what end users see. They’re building what makes AI features possible in the first place.

My experience as an AI Builder PM

Before Google, I was an AI Builder PM at Microsoft. I worked on a horizontal team that built AI models for other Microsoft product teams.

My daily work involved the full data science lifecycle: understanding how models are selected and trained, what the data going in looks like, what the metrics coming out actually mean, and what happens when models need to be retrained.

I worked alongside data scientists every day. My counterpart PMs on the consuming teams had some familiarity with ML, and I was the person who filled in the gaps. I helped translate data science decisions into product experience decisions for their teams and ultimately for our users.

One part of this job that nobody talks about: staying current with the research. My data scientists would share research papers among themselves, and while nobody asked me to read them, I started doing it anyway. It helped me follow their conversations, understand their tradeoffs, and anticipate how new techniques would affect the product.

Some of our projects, like churn prediction, used well-understood models. Others involved deep learning, where the model is significantly more powerful but nearly impossible to explain to a user.

Understanding those tradeoffs well enough to help balance the tension between model power and user trust was a core part of the job.

We built a churn prediction model for a Microsoft Business Application CRM. Customers would bring their data, and we’d predict whether their users were likely to churn.

The model worked. The data scientists delivered strong metrics. But customers didn’t care about precision or recall numbers. They wanted to know: Is this customer going to churn? Why? And how confident are you?

So we simplified the output to a high/medium/low score and showed the top factors contributing to the prediction. That meant pushing our data science team to carefully review what the model used as input features, because those features had to make sense to a non-technical user.

When the model wasn’t confident enough, we didn’t show a prediction at all, and we explained to the user why. Every one of those was a PM decision.
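To make those three decisions concrete, here is a minimal sketch of that output layer. It is not the code we shipped: it assumes the model already returns a churn probability, a separate confidence score, and per-feature contributions, and the thresholds (0.7, 0.4, 0.6), names, and message text are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical cutoffs for illustration only; real values would come from
# calibration work with the data science team.
HIGH_RISK = 0.7
MEDIUM_RISK = 0.4
MIN_CONFIDENCE = 0.6


@dataclass
class ChurnDisplay:
    risk: Optional[str]     # "high" / "medium" / "low", or None when we abstain
    top_factors: list[str]  # human-readable drivers, most influential first
    message: str            # what the user actually reads


def to_display(probability: float, confidence: float,
               contributions: dict[str, float], k: int = 3) -> ChurnDisplay:
    """Turn raw model output into a bucketed, explainable (or withheld) prediction."""
    if confidence < MIN_CONFIDENCE:
        # Abstain instead of showing a shaky score, and tell the user why.
        return ChurnDisplay(None, [], "Not enough signal to predict churn for this customer yet.")

    # Collapse the probability into a score a non-technical user can act on.
    if probability >= HIGH_RISK:
        risk = "high"
    elif probability >= MEDIUM_RISK:
        risk = "medium"
    else:
        risk = "low"

    # Rank features by absolute contribution so the strongest drivers surface,
    # whether they push churn risk up or down.
    ranked = sorted(contributions, key=lambda name: abs(contributions[name]), reverse=True)
    top = ranked[:k]
    return ChurnDisplay(risk, top, f"{risk.capitalize()} churn risk. Top factors: {', '.join(top)}.")
```

For example, `to_display(0.82, 0.9, {"seats removed recently": 0.5, "drop in weekly logins": 0.4, "open support tickets": 0.3, "long contract term": -0.2})` returns a "high" risk with the three largest drivers, while any call with confidence below 0.6 returns no score at all. Notice that the feature names are the explanation, which is why they had to be reviewed for readability.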

What the technical bar actually looks like

This path requires genuine technical depth. I didn’t write the code, but I had to understand:

What models we were using and why we chose them over alternatives

What the training process looked like and what happens when you feed it bad data

What metrics like precision, recall, and ROC-AUC actually mean for the product

How model drift works and the pipeline implications of needing to retrain

How to help partner PMs understand what AI can and can’t do for their product

What new research meant for our roadmap, and when a more powerful technique introduced tradeoffs (like explainability) that could hurt the user experience
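If precision, recall, and ROC-AUC are new to you, a small from-scratch sketch shows what they compute and why the decision threshold is a product choice. In a churn model, precision is the share of flagged customers who actually churn, recall is the share of churners you caught, and ROC-AUC measures how well the model ranks churners above non-churners regardless of threshold. The labels and scores below are toy values, and in practice you'd use a library such as scikit-learn; this version just exposes the arithmetic.

```python
def precision_recall(y_true: list[int], y_score: list[float],
                     threshold: float = 0.5) -> tuple[float, float]:
    """Precision and recall when every score >= threshold is flagged as positive."""
    tp = fp = fn = 0
    for label, score in zip(y_true, y_score):
        predicted = score >= threshold
        if predicted and label == 1:
            tp += 1   # flagged and actually churned
        elif predicted and label == 0:
            fp += 1   # false alarm
        elif not predicted and label == 1:
            fn += 1   # churner we missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def roc_auc(y_true: list[int], y_score: list[float]) -> float:
    """Probability that a random positive outscores a random negative (ties count half)."""
    pos = [s for label, s in zip(y_true, y_score) if label == 1]
    neg = [s for label, s in zip(y_true, y_score) if label == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.6, 0.3, 0.7, 0.2, 0.1]
print(precision_recall(labels, scores))                 # default 0.5 threshold
print(precision_recall(labels, scores, threshold=0.8))  # stricter threshold
print(roc_auc(labels, scores))
```

On this toy data, a 0.5 threshold gives precision and recall of 2/3 each. Raising it to 0.8 lifts precision to 1.0 but drops recall to 1/3: fewer false alarms, more missed churners. ROC-AUC stays at 7/9 either way, because it doesn't depend on the threshold. Deciding which side of that tradeoff your users can live with is the product conversation.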

If you’re serious about the Builder path, start with free ML courses on Kaggle or Stanford’s free coursework. And I want to stress: take a real theory course about how ML actually works. A prompt engineering class won’t prepare you for this. You need to understand the math, the training process, and why models behave the way they do.

If you love that technical depth, consider going deeper through a rigorous program. I personally did Georgia Tech’s Online MSCS specialized in AI after starting with Kaggle, and that level of investment made a real difference.

If you don’t enjoy the math and theory, that’s a strong and useful signal. This path might not be for you, and that’s completely fine. The AI Experiences PM path is equally valid and often equally well-compensated.

These roles are commonly found at companies building core AI infrastructure like OpenAI, Anthropic, and Google DeepMind, as well as on platform teams at many other companies, such as Apple, Netflix, Spotify, Stripe, and Datadog.

AI Enhanced PM

The third point on the spectrum is worth naming, but this one is a skill, not a role.

An AI Enhanced PM uses tools like ChatGPT, Claude Code, Lovable, v0, NotebookLM, Perplexity, and Google AI Studio to be more productive at their existing PM work. Drafting PRDs faster, organizing research, building quick prototypes, analyzing user feedback at scale. They’re using AI to do their current job better and faster.

Everyone should be doing this, regardless of whether you’re pursuing an AI PM career.

The risk is thinking that because you use AI tools daily, you’re prepared for AI PM roles. Using AI and building AI products are different disciplines with different preparation. Confusing the two is one of the most common mistakes in this transition.

Being AI-enhanced is your baseline. The career move is choosing between Experiences and Builder.

For practical guides on becoming an AI Enhanced PM right now, I’ve written about building an AI PRD Engine and a practical guide to RAG for PMs.

AI Product Sense: The Skill That Actually Matters

Now that you know the spectrum, here’s the skill that separates AI PMs from PMs who happen to use AI. This applies to both AI Experiences PMs and AI Builder PMs, though it shows up differently in each role.

AI Product Sense is the practical intuition that lets you make good product decisions when your product’s core behavior is probabilistic.

There are three principles at its core.

Principle 1: Plan for the failure, not just the feature.

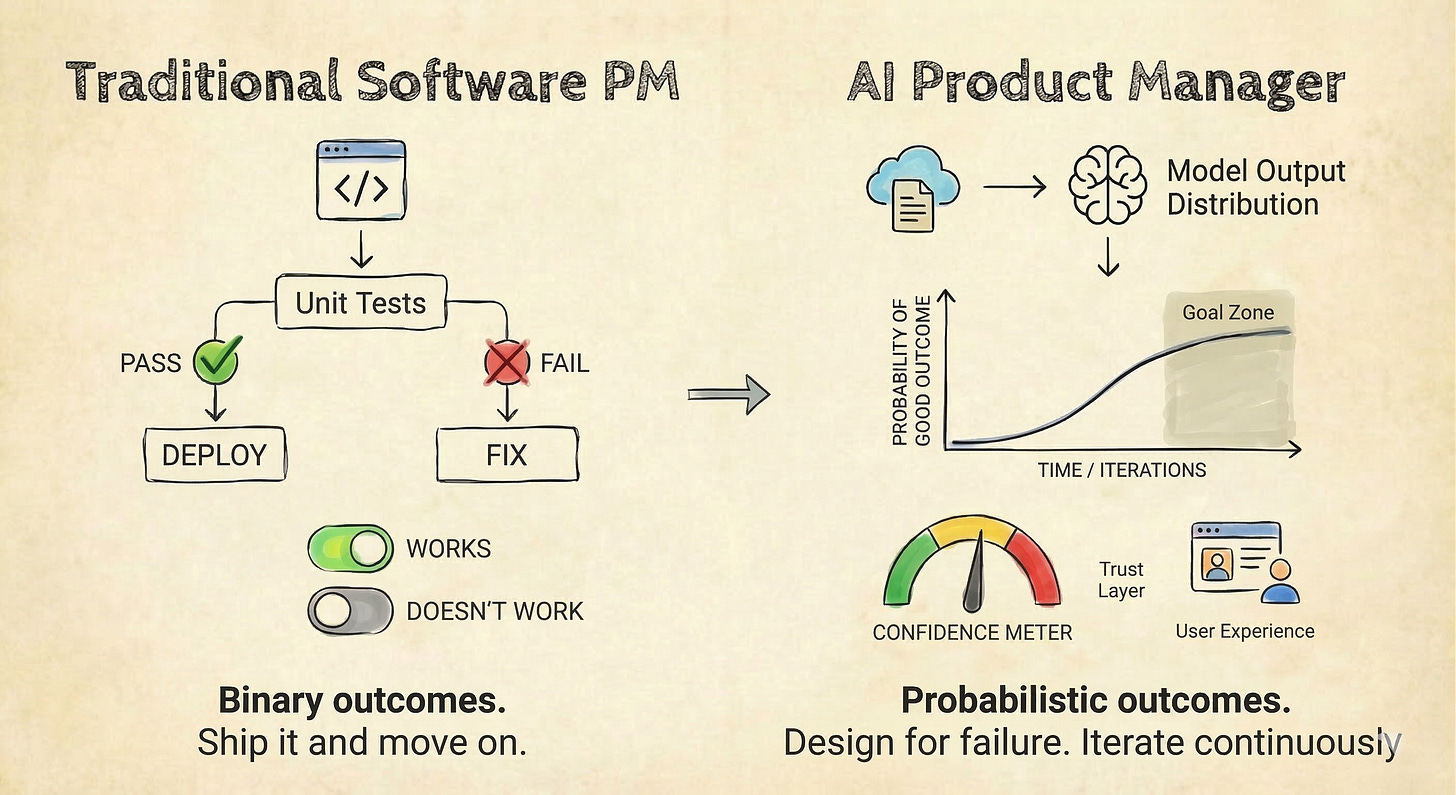

Traditional software either works or it doesn’t. A button click either triggers the right action or it’s a bug you can fix.

AI products work most of the time. And that “most of the time” changes everything about how you operate as a PM.

Your success metrics change. You can’t just measure “does this feature work?” You have to measure how often it works, how badly it fails when it doesn’t, and whether users can recover gracefully.

Your testing approach changes. You need evals running continuously, not just a QA pass before launch.

Your UX patterns change. You have to design for the failure case with as much care as you design for the success case.

This is the biggest mental shift for PMs coming from traditional software.
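One way to turn “how often it works, how badly it fails, and whether users recover” into numbers is a simple report over logged interactions. This is a minimal sketch that assumes each interaction is logged with a success flag, a failure severity, and a recovery flag; the field names and severity scale are hypothetical, and what counts as “success” or “recovered” is itself a product definition you’d agree on with your team.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Interaction:
    succeeded: bool
    severity: str = "none"   # hypothetical scale: "minor", "major", "harmful"
    recovered: bool = False  # did the user reach a good outcome after a failure?


def reliability_report(log: list[Interaction]) -> dict[str, object]:
    """Summarize success rate, failure severity mix, and recovery rate."""
    if not log:
        raise ValueError("empty log")
    failures = [i for i in log if not i.succeeded]
    return {
        # How often it works.
        "success_rate": (len(log) - len(failures)) / len(log),
        # How badly it fails when it doesn't.
        "failures_by_severity": dict(Counter(i.severity for i in failures)),
        # Whether users recover gracefully (1.0 when nothing failed).
        "recovery_rate": sum(i.recovered for i in failures) / len(failures) if failures else 1.0,
    }
```

With eight successes, one minor failure the user recovered from, and one major failure they didn’t, the report shows a 0.8 success rate and a 0.5 recovery rate. That is a very different product from one with the same 20% failure rate where every failure is minor and recoverable, even though a single “accuracy” number would rate them the same.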

At Google, our Data Science Agent required evals before launch and it still requires them today. The model’s behavior shifts, users find new edge cases, and the bar for “good enough” keeps moving. If you treat an AI feature like a shipped-and-done product, you’ll be caught off guard.
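To show the shape of a recurring eval gate, here is a minimal sketch built on the golden-dataset idea from the Spotify posting: a fixed set of prompts with expected answers, a grader, and a ship threshold. It is not how our team’s evals are built; the function names, the exact-match grader, and the 0.9 threshold are hypothetical.

```python
from typing import Callable

# A grader scores one (prompt, expected, actual) triple from 0.0 to 1.0.
Grader = Callable[[str, str, str], float]


def exact_match(prompt: str, expected: str, actual: str) -> float:
    """Simplest possible grader: case- and whitespace-insensitive equality."""
    return 1.0 if expected.strip().lower() == actual.strip().lower() else 0.0


def run_eval(golden: list[tuple[str, str]], system: Callable[[str], str],
             grader: Grader, ship_threshold: float = 0.9) -> tuple[float, bool, list[str]]:
    """Score the system on a golden dataset and decide whether it clears the bar."""
    if not golden:
        raise ValueError("golden dataset is empty")
    scores, failed = [], []
    for prompt, expected in golden:
        score = grader(prompt, expected, system(prompt))
        scores.append(score)
        if score < 1.0:
            failed.append(prompt)  # keep failures so a human reviews them
    mean = sum(scores) / len(scores)
    return mean, mean >= ship_threshold, failed
```

Because the grader is just a function, an LLM-as-a-judge grader would slot in with the same signature: it would ask a second model to rate `actual` against `expected` and return a score. That part is left out here because it depends on your model provider’s API. Rerunning the same golden set after every model update is what keeps “good enough” from silently drifting.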

What this means for your transition: When you’re building your AI project (more on that in the next section), don’t just build the happy path. Design what happens when the AI gets it wrong. That’s the signal hiring managers are looking for.

Principle 2: The best AI PMs say “no” to AI more often than they say “yes”.

Anyone can plug in an API. The hard part is knowing when AI actually solves the problem better than a simpler approach, and having the conviction to push back when leadership wants AI everywhere.

I wrote about this in my article on filtering AI noise, where I shared how leadership at a previous company treated the checkout page like an AI piñata. Everyone was throwing AI at every corner of the screen. Nobody asked whether an LLM actually made checkout faster or safer. Nothing from that meeting shipped.

That pattern is common. Executives demand AI. PMs scramble to show progress. Nobody asks whether AI is the right solution for the actual problem. Meta’s Business AI job posting gets this right. It explicitly asks PMs to “critically evaluate when AI is and isn’t the optimal solution”.

What this means for your transition: When you build your AI project, be ready to articulate why you chose AI over a simpler approach. “I used AI because it’s cool” will lose you credibility. “I tested a rules-based approach first, and here’s why AI performed better for this specific problem” will earn it.
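“I tested a rules-based approach first” can be as simple as scoring both approaches on the same labeled examples. Here is a minimal sketch, assuming a hypothetical support-ticket routing problem: `keyword_rules` is an illustrative baseline, the 5-point `min_lift` bar is an assumption, and `candidate` stands in for whatever wraps your model call.

```python
from typing import Callable

Classifier = Callable[[str], str]


def accuracy(classify: Classifier, examples: list[tuple[str, str]]) -> float:
    """Share of labeled examples the classifier gets right."""
    return sum(classify(text) == label for text, label in examples) / len(examples)


def keyword_rules(text: str) -> str:
    """Hypothetical rules-based baseline: route refund requests by keyword."""
    lowered = text.lower()
    return "refund" if "refund" in lowered or "money back" in lowered else "other"


def compare(examples: list[tuple[str, str]], baseline: Classifier,
            candidate: Classifier, min_lift: float = 0.05) -> tuple[float, float, bool]:
    """Return (baseline accuracy, candidate accuracy, whether the lift clears the bar)."""
    base = accuracy(baseline, examples)
    cand = accuracy(candidate, examples)
    return base, cand, (cand - base) >= min_lift
```

On a message like “This charge is wrong, please reverse it,” the keyword rules miss an obvious refund request, which is exactly the kind of gap that justifies a model. Accuracy lift is only part of the case, though: cost, latency, and how badly each approach fails should go into the same writeup.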

Principle 3: Your job is to translate model output into human trust.

A model that’s 95% accurate but that users don’t trust is worthless. How you surface results, explain confidence, handle errors, and build trust over time is the core of what AI PMs do.

At Microsoft, we built a churn prediction model that delivered strong technical metrics. But our users didn’t care about the metrics.

They needed a clear confidence level they could act on, they needed to understand why the model predicted what it predicted, and they needed to know when we couldn’t show a prediction at all. Every one of those product decisions shaped whether users trusted the output or ignored it.

What this means for your transition: When you evaluate AI products (or build your own), pay attention to the trust layer. How does the product explain itself? How does it handle low confidence? How does it recover from errors? That’s AI Product Sense in action, and it’s the kind of thinking you want to demonstrate in interviews and in your work.

What Hiring Managers Are Looking For

Beyond the job postings, what do hiring managers actually care about when they evaluate AI PM candidates?

After years of working in AI product management and seeing how teams hire for these roles, one pattern stands out: the PMs who get hired are the ones who have built something with AI. Not managed it. Not written specs about it. Built it.

Hiring managers want to see that you’ve gotten your hands dirty, that you understand AI’s limitations from first-hand experience, and that you can translate that understanding into product decisions. Listing “AI/ML knowledge” on your resume won’t get you there. Showing what you’ve shipped will.

What To Do Now

Here’s the actionable part. I’m going to focus primarily on the AI Experiences PM path since that’s where most transitioning PMs will land, and I’ll note where the advice differs for the Builder path.

Step 1: Build and ship something publicly.

Not a Kaggle project. Not a tutorial you followed. An actual product that solves a real problem using AI.

Use tools like Lovable, Claude Code, Google AI Studio, Perplexity, NotebookLM, or Nano Banana. The goal here is to prove you have AI Product Sense: you identified a real problem, decided AI was the right solution (and can explain why), designed for failure modes, and shipped something real that people can use.

For the Builder path, the bar is different. Build something that demonstrates systems-level thinking: data pipelines, model selection, evaluation. Show that you understand the full lifecycle.

Step 2: Build in public.

Share your process, your learnings, your failures. Write about it. Post about it. Let people see how your brain works.

Hiring managers are looking for signals beyond the resume, and the PMs who share their thinking publicly create a trail of evidence that no resume bullet point can match.

Step 3: Use what you built as your career evidence.

This is the part most guides skip. Building the project is not the end goal. The project becomes your proof.

Jaclyn Konzelmann, Director of Product Management at Google, put it simply in her latest advice to aspiring AI PMs: she looks for words like “prototyped”, “built”, and “deployed” on resumes, and she wants to see familiarity with tools like Google AI Studio, Lovable, Claude Code, and Cursor.

In her words, “If you want to become an AI PM, start building. Create Opals, make AI-powered videos, write about your process. The proof is in the pudding.”

Your project feeds directly into everything that matters for your transition:

Your resume. You now have a line item that says “built” and “shipped”, not just “managed” and “defined”. You can link to it. You can show it.

Your interview stories. Every behavioral question (“tell me about a time you shipped something”, “how did you handle ambiguity”, “describe a technical tradeoff you navigated”) can draw from this project. You lived it. You made real decisions about when AI was the right solution, how to handle edge cases, and what to cut.

Your portfolio and online presence. A PM who has a live product, a blog post about why they built it, and a clear articulation of the AI tradeoffs they navigated stands out from a PM who lists skills on a resume.

Your product sense demonstration. If you’re asked to deconstruct an AI product or pitch a 0-to-1 idea, you can draw from something you actually built rather than theorizing in the abstract.

The project is not a checkbox. It’s the foundation for your entire transition narrative.

Step 4: Sharpen your AI Product Sense by using AI products as a PM.

Start using AI products with a PM lens. For every AI feature you encounter, ask yourself:

What works about this experience? What fails?

Why did they design it this way?

How are they handling trust, errors, and edge cases?

What would I change, and why?

This habit compounds over time. It gives you a vocabulary and an intuition that shows up naturally in interviews, in conversations with hiring managers, and in the way you think about your own products.

Step 5: Get the right education for your path.

For AI Experiences PMs: You don’t need a degree in ML. Your education comes through building and shipping. Build projects with tools like Lovable and Claude Code, write about what you learn, share your work publicly, and engage with the community. The combination of building, writing, and sharing is what develops your product sense and makes you visible to hiring managers.

For AI Builder PMs: Start with free ML theory courses on Kaggle or Stanford. If you love the math and theory, go deeper. I did Georgia Tech’s Online MSCS specialized in AI, and that level of investment made a real difference. If you don’t enjoy the technical depth, the Experiences path is a better fit.

And regardless of which path you choose: Be an AI Enhanced PM right now.

Use AI tools in your daily work today. ChatGPT, Claude Code, Lovable, v0, NotebookLM, Perplexity, Google AI Studio. Start building that muscle now while you prepare for the transition.

Back to the Main Quest

Today’s side quest was about cutting through the noise around becoming an AI PM and figuring out what the role actually requires.

The short version: “AI PM” is a spectrum. AI Experiences PMs build user-facing products powered by AI. AI Builder PMs build the infrastructure and models behind them. AI Enhanced PMs use AI to work better, and that’s a skill everyone should have but not a career path on its own.

The worst thing you can do is prepare generically. Figure out where on the spectrum you want to land, and go deep on what actually matters for that role.

Safe travels on your Main Quest this week.

Party up! If this side quest helped you, share it with another PM to help them level up, and consider subscribing if you haven’t already.

See you in the next Side Quest 👋,

Diego
