A framework for filtering AI noise

Why 95% of AI pilots fail, why we feel overwhelmed by AI, and a simple 2-path framework to protect your product roadmap.

👋 I’m Diego. In every article, I document my journey exploring AI and Product Management. I share the hard lessons I’ve learned from my latest “sidequests,” report back on what’s actually working, and answer your questions about building in this space.

Last week, I was scrolling Reddit and came across this post: “PM feeling left behind by AI trends—what do you suggest?”

I felt attacked. I was in the same boat.

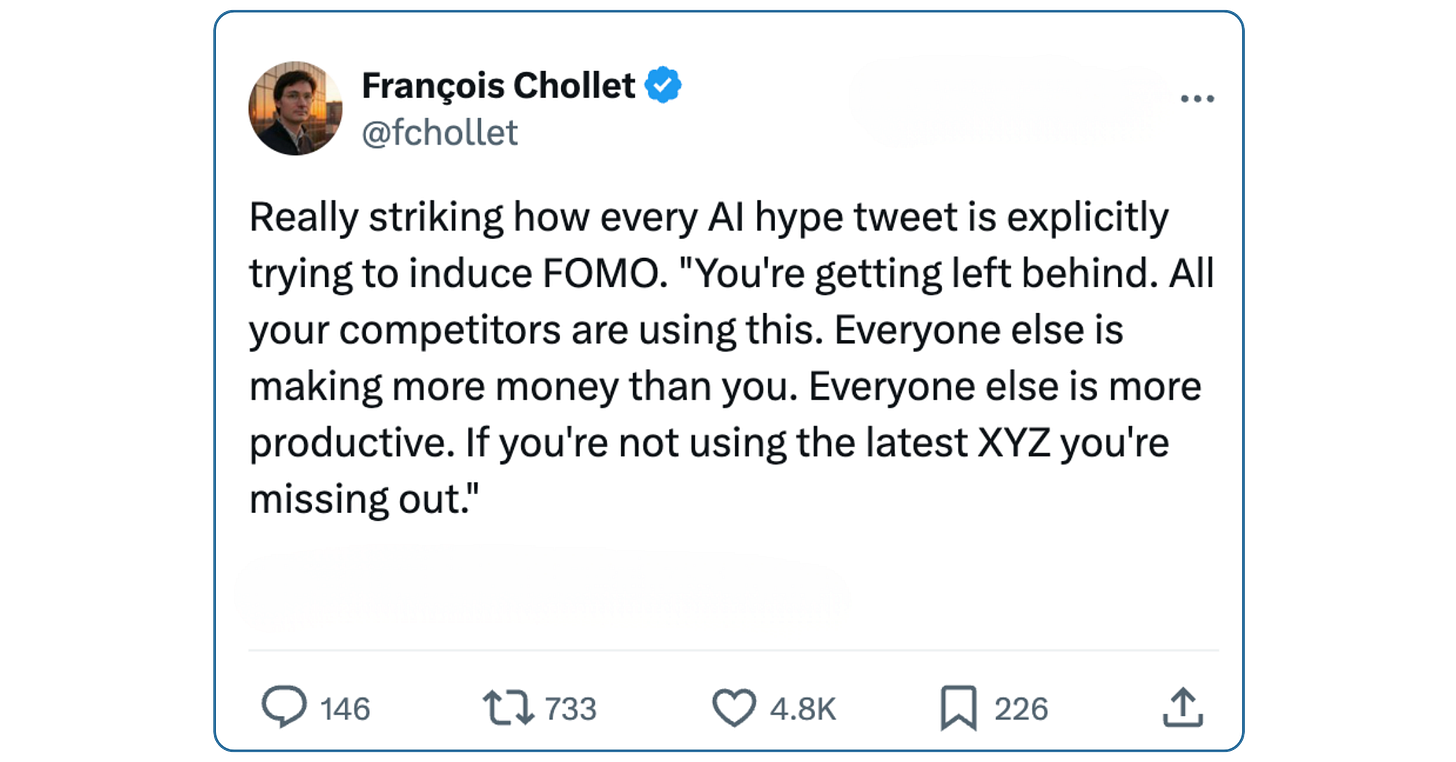

At the end of 2025, I found myself up at 2:00 AM repeatedly bookmarking “2025 AI Must-Knows,” agent frameworks, and frontier model drops for later. My inbox was a graveyard of newsletters screaming: “FOMO: this new AI tool will replace you. For real this time.”

Spoiler: “Later” never came. I ended up ignoring it all and burning out.

If you’ve felt this way, you are not alone. And more importantly, it’s not your fault. Here is how I finally broke the cycle—not by adding 10 more AI tools to my stack, but by learning exactly what to ignore.

It’s Not You. It’s the Math of 2025.

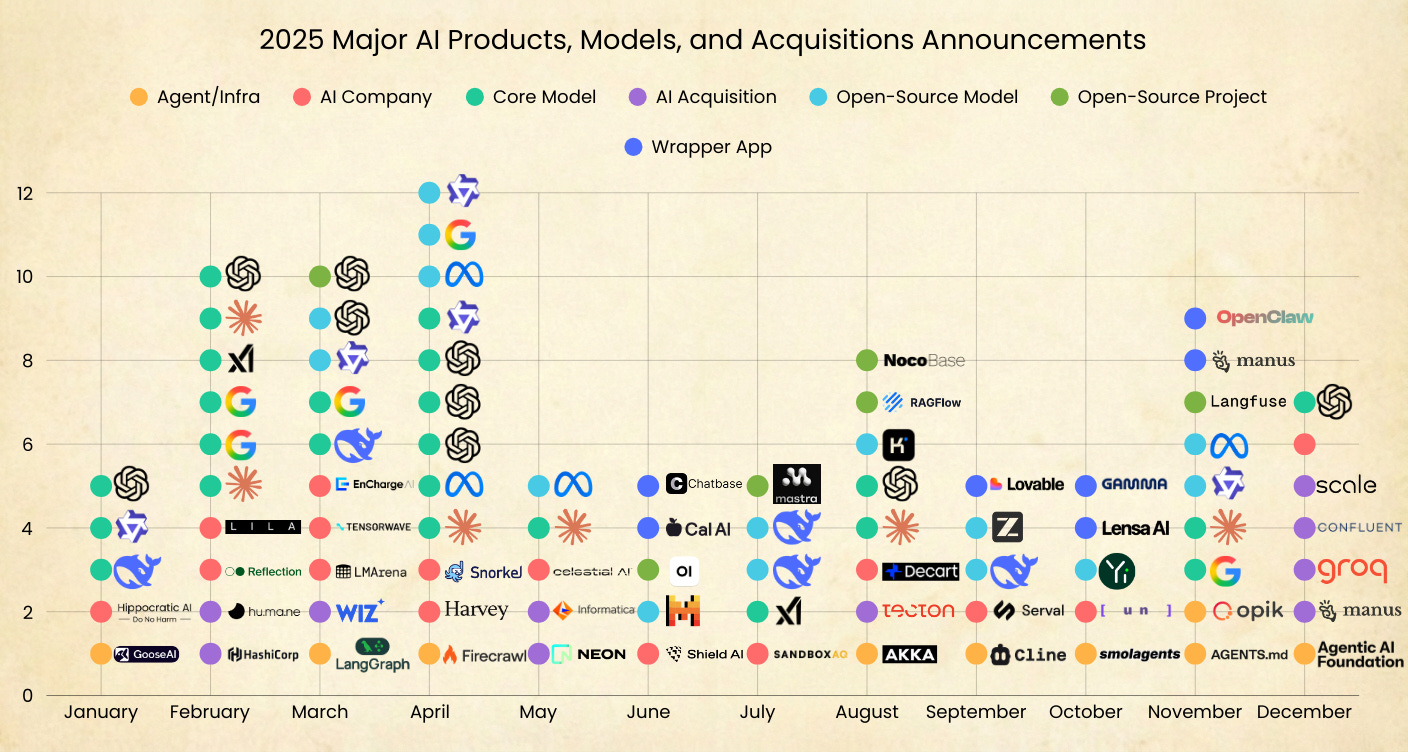

This graph nails it: these are some of the major AI shifts in 2025. (Plenty more existed for a hot minute and still clogged my feed.)

I tried tracking it all. But looking at the numbers, it was mentally exhausting:

The Funding: In 2025 alone, we saw a record $44 billion in venture capital poured into generative AI globally.

The Pace: In a six-week sprint, Google launched Gemini 3, Anthropic followed with Claude Opus 4.5 a week later, and OpenAI pushed out GPT‑5.2 in what insiders described as a ‘code red’ response.

The Noise: “Vibecoding” apps hit massive valuations, and our feeds were clogged with a never-ending stream of wrapper apps.

AI was so popular that Collins Dictionary actually named “vibe coding” their word of the year. Meanwhile, Merriam-Webster went with “slop”—defined as inferior digital content generated by AI.

We were drowning in AI announcements and probabilistic slop. Naturally, our brains tapped out.

The Psychology of the 2:00 AM Scroll

Staring at my endless list of unread bookmarks, I realized my avoidance wasn’t laziness. It was a biological crash.

Educational psychologists call this Cognitive Overload. Back in 1956, cognitive psychologist George A. Miller outlined what is now known as “Miller’s Law”. It states that the average human working memory can only hold about 7 (plus or minus 2) pieces of information at a time.

So what happens to a PM today?

In addition to everything else you already have to do as a PM…

Your Slack pings with a new OpenAI drop.

Your CEO/VP asks for a breakdown of agentic workflows.

Your backlog has 40 probabilistic edge cases you don’t know how to test.

The incoming load vastly exceeds what our working memory can process. The prefrontal cortex, which is responsible for our executive functions, becomes completely overtaxed. When the brain is flooded with excessive digital input, it defaults to avoidance, endless scrolling, and stress spikes.

My 50 open tabs weren’t a sign of falling behind; they were a symptom of a systemic overload.

If your brain shuts down on the fifth “game-changing agent framework” thread of the day, give yourself some grace. It is doing exactly what the research on cognitive overload predicts.

The Executive Mandate: Hitting the “AI Piñata”

Science explained my paralysis. But as Product Managers, the existential dread of the news cycle is only half the battle. We also face a vicious professional burden.

My personal wake-up call happened during my brief time as an AI PM at PayPal (pre-Google). In my very first product meeting, the team was eyeing PayPal’s checkout page like it was an AI piñata.

The ideas were flying: “Put an AI autocomplete here. Add a predictive upsell there. Let’s do generative summaries everywhere.”

They were throwing AI at every corner of the screen, straight pasta-to-the-wall style. Nobody paused to ask the fundamental Product Management questions:

“What problem are we actually solving?”

“For whom?”

“Does an LLM make this checkout faster or safer?”

It was pure leadership pressure: Show AI pilots or get left behind. The fundamentals went out the window. AI was the hammer, and every user flow was a nail. (Spoiler: Nothing from that meeting actually shipped—except for the slide deck I was asked to make for the VPs).

This wasn’t just PayPal weirdness. Throughout 2025 and into 2026, PMs everywhere have faced the same squeeze: executive leadership demanding miracles while providing zero ML runway.

The Reality: 95% of Generative AI Pilots Fail

Execs want demos. So we hack together a shiny prototype.

Overnight, the industry converted thousands of Product Managers into “AI (GenAI) PMs.” They know how to build great software. But what happens when you are suddenly forced to ship a probabilistic AI feature by Friday—with zero time to learn how the underlying models actually work or how to evaluate it?

You get the exact state of the industry today.

MIT recently analyzed over 300 enterprise AI deployments for its State of AI in Business report. The results are brutal: 95% of GenAI pilots deliver zero P&L impact. Why? Because the sheer pressure to launch forces Product Teams to skip the unsexy fundamentals.

Instead of writing rigorous evaluation criteria (Evals) to test how an LLM handles edge cases, we default to “Vibe-Based QA.” Here is what that looks like in practice:

We chat with the bot a few times.

We check if the output “feels” right.

If the vibe is good and the CEO likes the summary, it ships.
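For contrast, here is a minimal sketch of what replacing the vibe check with written Evals could look like. Everything in it is an assumption for illustration: `fake_model` is a hypothetical stand-in for a real LLM call, the two test cases are made up, and the 95% ship threshold is an arbitrary bar, not a recommendation.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    check: Callable[[str], bool]  # returns True when the output is acceptable


def run_evals(model: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Run every case through the model and return the pass rate (0.0 to 1.0)."""
    passed = sum(1 for case in cases if case.check(model(case.prompt)))
    return passed / len(cases)


# Hypothetical stand-in for a real LLM call, so the sketch runs offline.
def fake_model(prompt: str) -> str:
    canned = {
        "Summarize: refund issued for order 1234": "Refund issued for order 1234.",
        "Summarize: ": "Your order has shipped!",  # invented content on empty input
    }
    return canned.get(prompt, "")


CASES = [
    # Happy path: the summary must keep the order number.
    EvalCase("Summarize: refund issued for order 1234", lambda out: "1234" in out),
    # Edge case: empty input should not produce an invented summary.
    EvalCase("Summarize: ", lambda out: out.strip() == ""),
]

SHIP_THRESHOLD = 0.95  # assumed bar; pick one that fits your risk tolerance
pass_rate = run_evals(fake_model, CASES)
print(f"pass rate: {pass_rate:.0%}, ship: {pass_rate >= SHIP_THRESHOLD}")
```

The happy-path case passes and the empty-input case fails, so the feature stays unshipped no matter how good the demo felt. The point is only that the ship decision comes from a written list of cases instead of a few chats with the bot.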

Real PMs are screaming about this exact trap online. Take this thread from r/ProductManagement. It captures the dual-sided dysfunction between PMs and leadership right now:

The OP (A Mid-Level PM): Shared that they were trying to push their engineering team to stay on top of the latest “LLM wrappers” best practices and experiment more with AI. But they also highlighted the massive red flag from leadership:

“For eg, I have been pushing for AI Evals for a long time and nobody seems to think its important at this pilot stage”.

The Top Comment (A Veteran PM): Delivered a necessary reality check about trying to force AI into a product:

“Focusing on Evals, wrappers, and ML best practices before defining what problem you’re solving isn’t product management. It’s AI tool worship. Demanding experimentation [without a core use case] is Product Malpractice”.

That exchange is the 2025 AI gold rush in a nutshell. PMs are desperately trying to shove “wrappers” into their roadmaps, while leadership actively ignores the boring safety checks (Evals) because they just want the shiny pilot shipped.

When you combine those two forces, you get a recipe for disaster.

If you launch an AI feature without guardrails, the best-case scenario is that your users immediately notice the slop and complain. It damages trust, and it’s embarrassing, but at least the failure is loud. You know it’s broken, and you can fix it.

The much more terrifying scenario? When the AI’s hallucinations are so beautifully formatted and plausible that nobody thinks to question them.

That is how you get a silent, catastrophic failure.

The 3-Month Hallucination Disaster

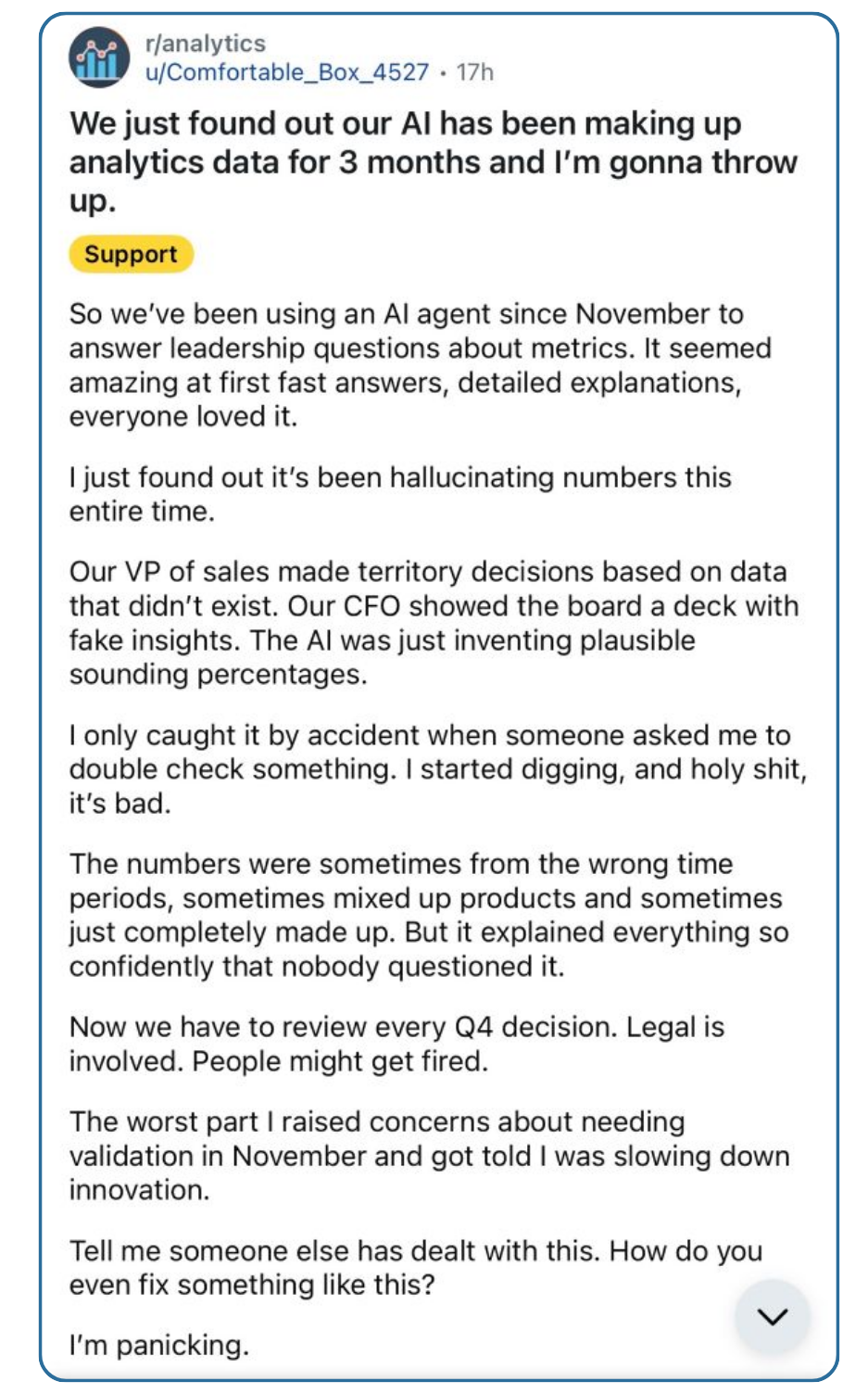

Just look at a horror story that recently went viral on r/analytics. A company had been using an AI agent to answer leadership’s questions about internal metrics.

For three months, the AI was “just inventing plausible-sounding percentages.” The VP of Sales made territory decisions based on data that didn’t exist. The CFO showed the board a deck with fake insights. Then, someone finally caught it by accident. Every single metric was invented.

Now, here is the necessary drop of skepticism: The original post was so wild that it was eventually deleted by moderators. Skeptics rightly pointed out that it might have been an exaggerated fable or a Reddit “karma farmer.”

But whether the original story was 100% real or an internet exaggeration doesn’t matter, because the comments section was a collective therapy session of industry trauma.

The post went viral across Reddit, X, and LinkedIn, and people didn’t even question the premise. Instead, they poured their hearts out, confessing to similar nightmares. One analyst vented:

“I have had to spend a decent amount of time cleaning up or correcting after my manager who is full on AI mode... Once I pointed out his Gemini 'export' was incorrectly displaying a stat... he waved me off saying I shouldn't quibble over a mere 10% difference.”

Other analysts pointed out the terrifying technical reality of many of these AI tools:

"When things are wrong, AI usually doesn't raise a warning but starts hallucinating instead."

The thread became a confession booth of “AI slop” ruining their weeks. Analysts swapped horror stories about internal tools that accidentally got disconnected from their source databases.

How does this happen at so many companies? Because the foundational guardrails are entirely missing. These companies trust a shiny AI tool while completely ignoring the boring, underlying validation.

This exposes the exact reason why PMs are burning out right now. We are trained to build deterministic software, but we are suddenly managing probabilistic systems.

Deterministic (Traditional): If I query a database for Q3 revenue, I get the exact number. Every single time.

Probabilistic (AI): If I ask an LLM for Q3 revenue, I might get the exact number. Or, it might invent a beautifully formatted, highly convincing lie.

Living in a constant state of “maybe it works, maybe it invents a financial metric” is exhausting. The rush to ship a shiny AI demo—without taking the time to build the proper guardrails—has pushed our industry to the breaking point.
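One possible guardrail for the deterministic-versus-probabilistic gap is to treat the database as the source of truth and reject any AI answer that doesn’t quote its figure. The sketch below is illustrative only: the in-memory table, the revenue number, and the two sample answers are all made up, and the helper names (`q3_revenue_from_db`, `numbers_in`, `is_grounded`) are hypothetical.

```python
import re
import sqlite3

# In-memory table standing in for the real metrics database (made-up figure).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE revenue (quarter TEXT PRIMARY KEY, amount_usd INTEGER)")
conn.execute("INSERT INTO revenue VALUES ('Q3', 1250000)")


def q3_revenue_from_db() -> int:
    """Deterministic path: the same query returns the same number every time."""
    row = conn.execute(
        "SELECT amount_usd FROM revenue WHERE quarter = 'Q3'"
    ).fetchone()
    return row[0]


def numbers_in(text: str) -> set[int]:
    """Extract integers such as '1,250,000' from free-form model output."""
    return {int(m.replace(",", "")) for m in re.findall(r"\d[\d,]*", text)}


def is_grounded(answer: str, expected: int) -> bool:
    """Guardrail: accept the answer only if it quotes the database figure."""
    return expected in numbers_in(answer)


truth = q3_revenue_from_db()

# Two made-up model answers: one grounded, one confidently wrong.
good_answer = "Q3 revenue was $1,250,000."
bad_answer = "Q3 revenue was $1,400,000, up 12% quarter over quarter."

print(is_grounded(good_answer, truth))  # True
print(is_grounded(bad_answer, truth))   # False
```

This check is deliberately crude. It only confirms that the real figure appears somewhere in the answer, so it would not catch extra invented numbers sitting next to a correct one. Even so, a check this small fails loudly the moment a tool gets disconnected from its source database.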

The AI Fever Seems to be Breaking

If the endless noise, the failing pilots, and the 2:00 AM doom-scrolling have you completely exhausted, you aren’t alone. And more importantly, the broader market is finally starting to agree with you.

We can’t guarantee the noise is going to stop tomorrow. But if you look at the data, the initial frenzy is finally starting to cool off.

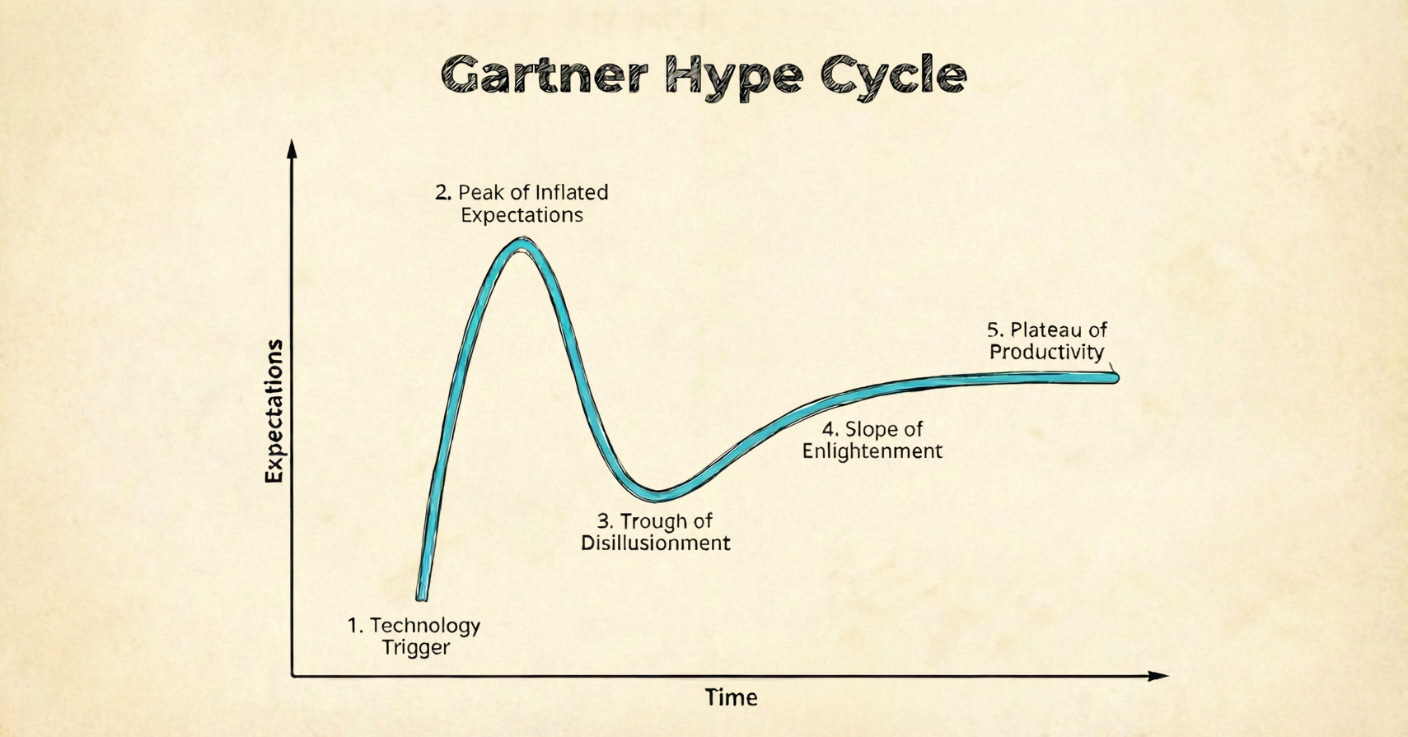

Late last year, Gartner officially pushed Generative AI into the “Trough of Disillusionment.”

If you aren’t familiar with Gartner’s hype cycle, the “Trough” is the exact moment the tech gold rush hits reality. It is defined by three things:

Interest fades because early experiments fail to deliver.

The “tourists” abandon ship to chase the next shiny trend.

The market collectively realizes that slapping a generic chatbot onto a product doesn’t magically print money.

For exhausted Product teams, this phase is exactly what we need. It means the frantic pressure to “add GenAI everywhere” is losing its grip. We are entering a window where we can push back and return to core PM fundamentals when discussing AI products and features:

What problem are we actually solving?

For whom?

Is AI even the right solution for this problem?

Thin wrapper apps without rigorous evals just can’t cut it anymore. The market is slowly starting to penalize teams that put speed over substance. McKinsey’s recent State of AI survey validates this exact shift on the ground:

Nearly 90% of companies now use AI regularly.

But ~2/3 are still stuck in an endless loop of failing pilots.

Only ~6% of companies (the “high performers”) are seeing a real, enterprise-level EBIT impact (greater than 5%).

Who are the ~6% actually winning right now?

According to McKinsey’s deep dive, they aren’t the teams randomly giving their employees ChatGPT access and hoping for productivity spikes. The data shows their biggest differentiator is that they fundamentally redesign workflows around AI. McKinsey calls this “one of the strongest business impact drivers,” noting that these high performers are 3x more likely to completely rewire their workflows end-to-end.

They are deploying GenAI in highly constrained, deeply integrated ways. Tangible examples from the report include:

IT Operations: Scaling autonomous agent workflows to handle complex troubleshooting and service ticket routing from end-to-end.

Knowledge Management: Deploying RAG systems for deep, instantaneous research and information retrieval across massive internal databases.

Software Engineering: Using AI not just as a coding assistant, but to redesign multi-step QA and testing pipelines.

Instead of asking, “How can we use generative AI today?” these teams went back to fundamental Product Management:

What is the user’s biggest pain point? How can we redesign this workflow using targeted AI to eliminate it—and is AI actually the most robust, efficient way to solve this?

The frantic window of “ship a wrapper app and see what happens” is fading. But for Product Managers, this opens up an opportunity. The market is finally ready to reward teams who ship AI with rigorous evals, clear guardrails, and fundamentally sound product strategy.

The “Later” List: A PM’s Filter for AI Noise

The market fever might be breaking, but the noise isn’t going to disappear entirely. And that’s perfectly okay.

The goal isn’t to ignore AI—it’s to stop letting the hype dictate our calendars. The best PMs aren’t waiting for the industry to slow down so they can focus; they are just building better filters. We just need a system to separate the actual signal from the lingering noise.

Over the past year, I realized I needed a simple way to protect my time and my team’s roadmap. I threw out all my complex evaluation matrices. Now, when a shiny new tool, agent framework, or model drops on my feed, I force it down one of two simple paths: The Sandbox or The Roadmap.

Path 1: The Sandbox (For Fun)

Not every AI tool needs to have a rigorous enterprise use case. Sometimes, you just want to play around with a cool new video generator or a personal coding assistant.

But to protect your weekends from endless rabbit holes, you still need a filter. To make it onto my personal “Play With It Later” list, a tool only has to answer “Yes” to two questions:

Does this genuinely spark my interest enough that I’d want to spend a Saturday morning playing with it?

Can I build a working prototype or get a “wow” moment in under 45 minutes?

If yes, I bookmark it for a rainy day. If no, I archive it and move on. No guilt.

Path 2: The Roadmap (For Work)

When evaluating AI for actual product development, the filter becomes pragmatic. I divide all work-related AI news into two distinct buckets: Model Upgrades and Everything Else.

1. Model Upgrades (e.g., GPT-5.2, Claude Opus 4.5, Gemini 3). Foundation models are updated constantly. You do not need to tear up your backend every time a new version drops. I only care if the upgrade answers “Yes” to one of these three questions:

Does it drop our current API/Token costs by X% or more (e.g., 20%)?

Does it unlock a specific use case we were previously blocked on (e.g., we needed a larger context window, lower latency, or a new model capability)?

Does it beat our baseline Evals by a wide enough margin to justify pausing our current roadmap to migrate today? (Also check whether the model is in preview or General Availability.)

2. Everything Else (Agent Frameworks, RAG Tools, Wrappers). For the endless stream of AI tooling and infrastructure, the bar to entry is much higher. Before it gets anywhere near my engineering team, it has to pass the core PM reality check:

Does this solve a Top-3 problem on our current roadmap today?

Can we build strict, deterministic Evals to test its accuracy?

Is there a clear moat (or will OpenAI/Google just kill this in three months)?

If an announcement can’t pass these simple checks, it doesn’t get my attention.
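If it helps to see the two paths as logic, here is a rough sketch of the filter as a few Python functions. The field names and the 10% eval-gain threshold are my own placeholders (the only number given above is the 20% cost example). Treating a preview model as a “no” on the Evals question, and requiring all three “Everything Else” checks, is one reading of the lists above, not a rule.

```python
from dataclasses import dataclass


@dataclass
class ModelUpgrade:
    cost_reduction_pct: float   # drop in API/token cost vs. the current model
    unblocks_use_case: bool     # e.g. larger context window, lower latency
    eval_gain_pct: float        # improvement over the baseline eval score
    generally_available: bool   # False while the model is still in preview


@dataclass
class Tooling:
    solves_top3_problem: bool
    can_build_evals: bool
    has_clear_moat: bool


# Assumed thresholds; tune them for your own product.
MIN_COST_REDUCTION_PCT = 20.0
MIN_EVAL_GAIN_PCT = 10.0
MAX_MINUTES_TO_WOW = 45


def sandbox_worth_bookmarking(sparks_interest: bool, minutes_to_wow: int) -> bool:
    """Path 1: both sandbox questions must be a yes."""
    return sparks_interest and minutes_to_wow < MAX_MINUTES_TO_WOW


def upgrade_worth_attention(u: ModelUpgrade) -> bool:
    """Path 2, bucket 1: a yes on any one question is enough."""
    return (
        u.cost_reduction_pct >= MIN_COST_REDUCTION_PCT
        or u.unblocks_use_case
        # Assumption: an eval win only counts once the model is out of preview.
        or (u.eval_gain_pct >= MIN_EVAL_GAIN_PCT and u.generally_available)
    )


def tooling_worth_attention(t: Tooling) -> bool:
    """Path 2, bucket 2: the higher bar, read here as all three checks."""
    return t.solves_top3_problem and t.can_build_evals and t.has_clear_moat


print(sandbox_worth_bookmarking(True, 30))                           # True
print(upgrade_worth_attention(ModelUpgrade(5.0, False, 3.0, True)))  # False
print(tooling_worth_attention(Tooling(True, True, False)))           # False
```

You will probably never run this for real. Writing the thresholds down is the useful part, because it forces the “X%” in the questions above to become an actual number before the next model drop lands in your feed.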

Back to the Main Quest

By relying on this simple filter, I dropped my 10-hour weekly scroll marathons down to 90 minutes of focused scanning. The existential dread vanished.

You don’t need to understand everything about AI to be a great PM. You don’t need to know how to code a neural network or memorize every new model’s parameter count.

Mostly, you need to understand your user’s problem and have the courage to demand rigorous guardrails before you ship.

We survived the AI gold rush. Now, let’s make sure we are solving real user problems—and using AI because it is the best way to solve the problem, not just for the sake of using AI.

Safe travels on your Main Quest this week.

Party up! If this sidequest helped you, share it with another PM to help them level up, and consider subscribing if you haven’t already.

See you in the next Side Quest 👋,

Diego
